01

Multi-provider support

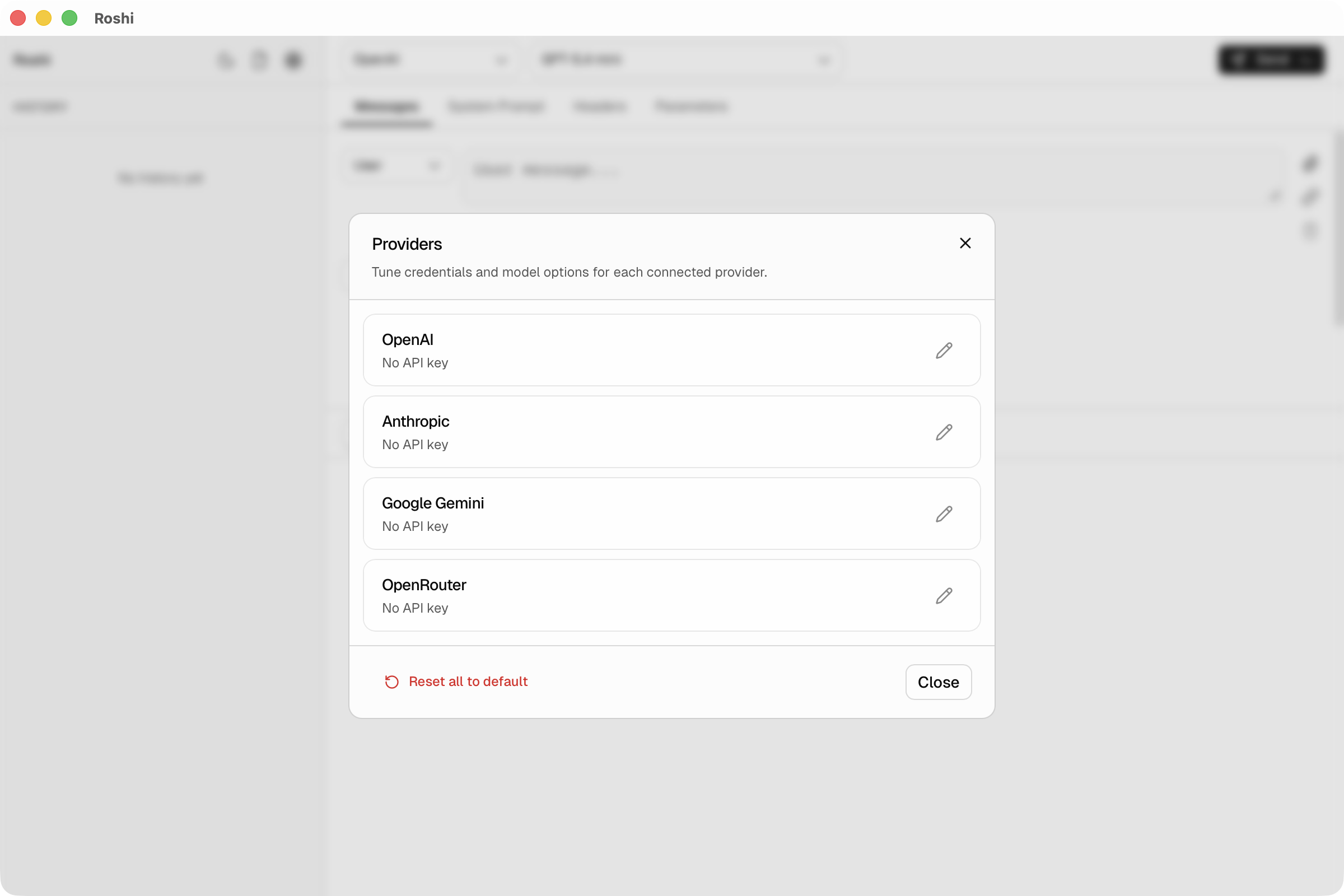

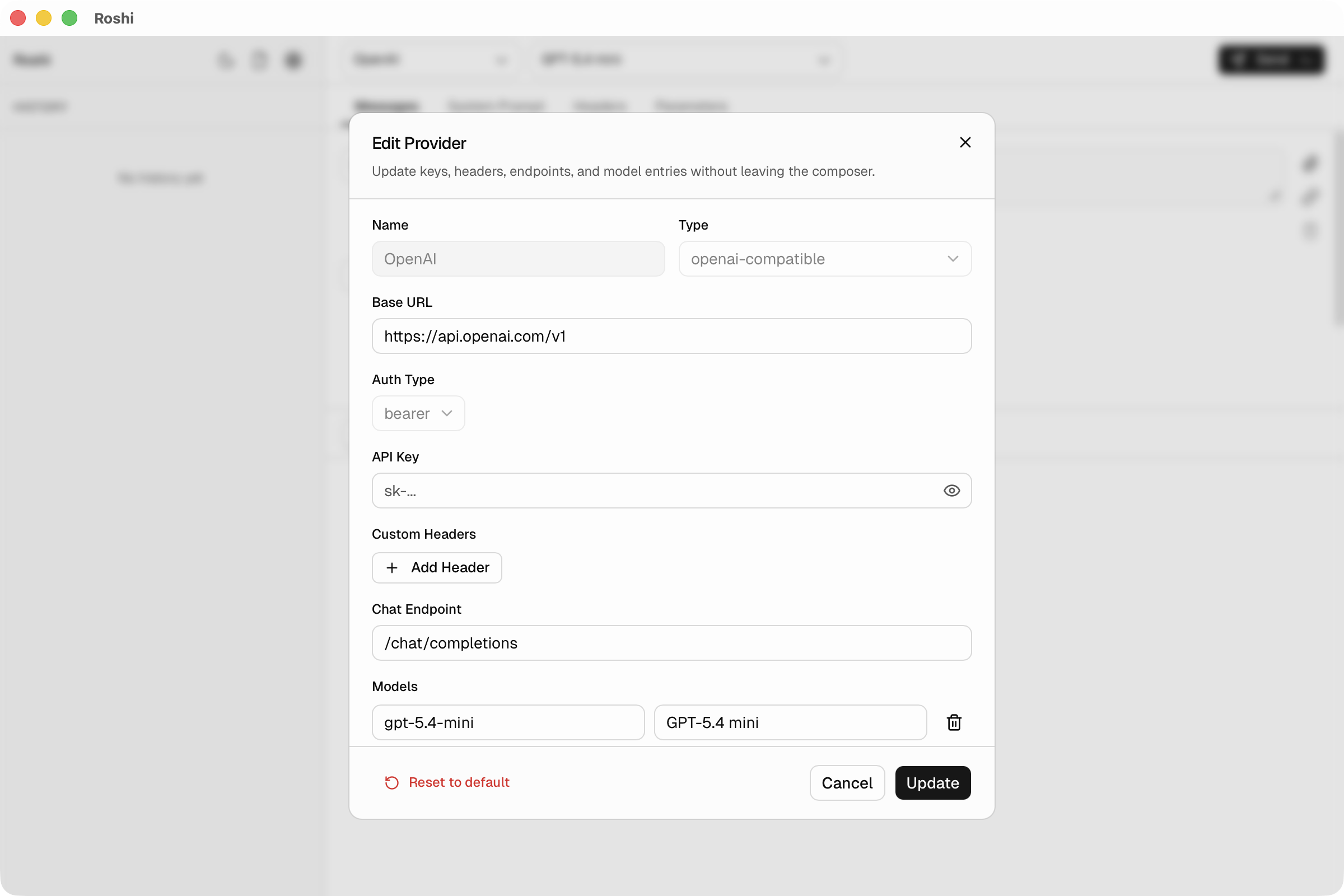

Built-in templates for OpenAI, Anthropic, Gemini, and OpenRouter. Add any OpenAI-compatible, Anthropic-compatible, or Gemini-compatible endpoint for internal or experimental infrastructure.

Local-first LLM API workbench

Stream responses, inspect payloads, manage providers, and generate code snippets — all from one local-first desktop app. No backend, no sign-up.

Features

01

Built-in templates for OpenAI, Anthropic, Gemini, and OpenRouter. Add any OpenAI-compatible, Anthropic-compatible, or Gemini-compatible endpoint for internal or experimental infrastructure.

02

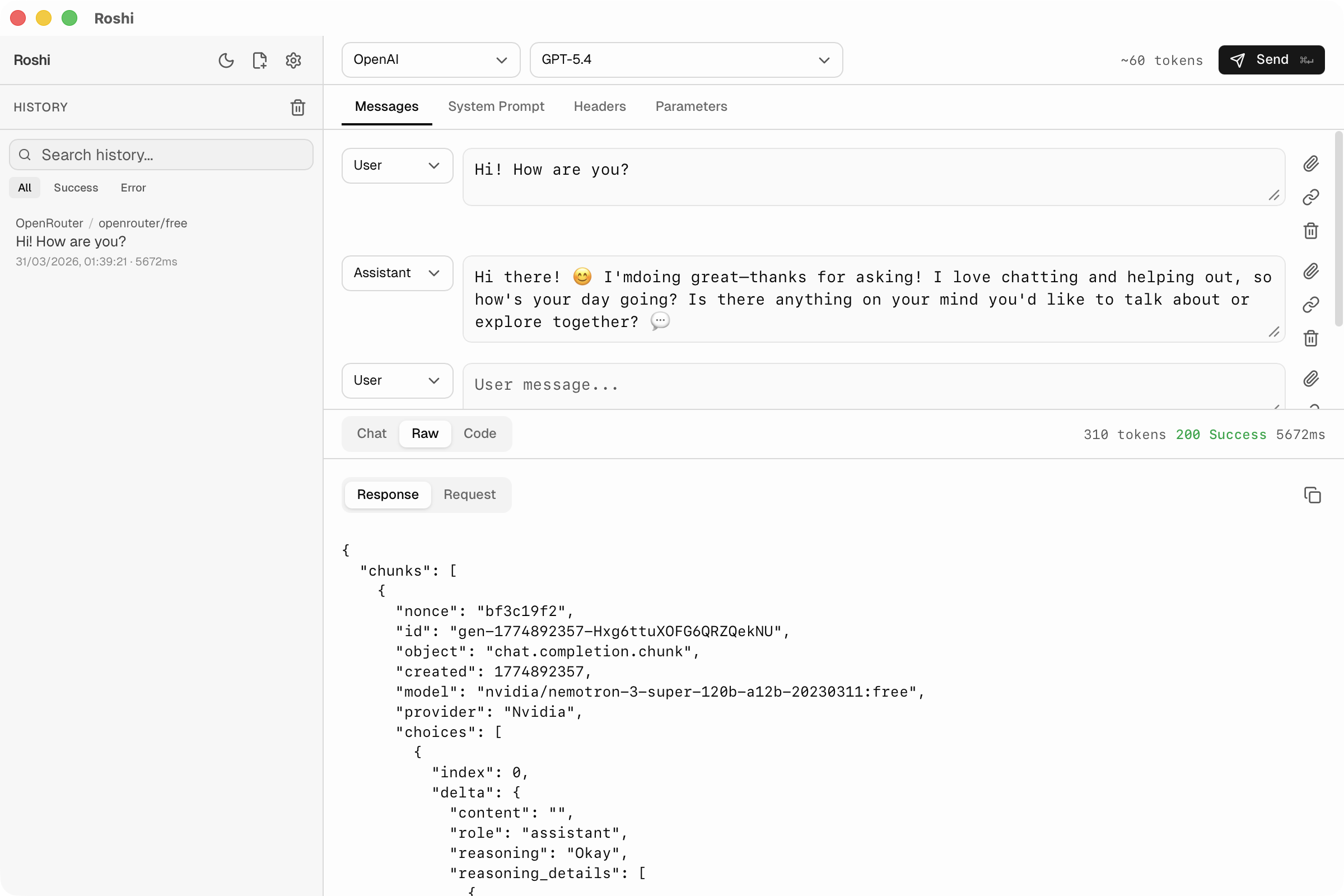

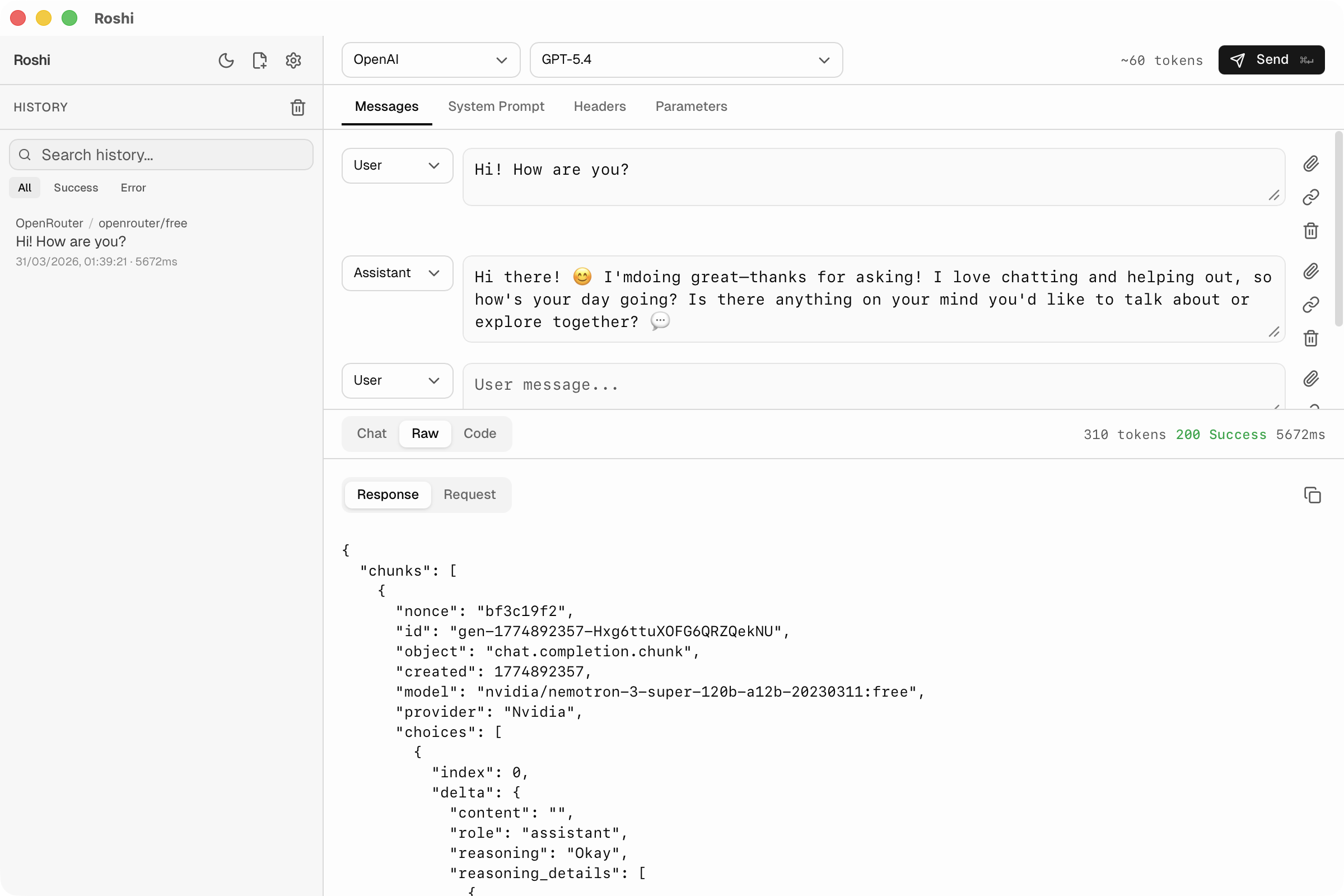

Watch responses arrive in real time. Inspect token flow, partial output, and final payloads in a purpose-built interface.
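To make "partial output" and "final payload" concrete, here is a minimal sketch of how a streamed response can be reassembled. It assumes the server-sent-events framing used by OpenAI-compatible chat endpoints (`data: {...}` lines, closed by `data: [DONE]`). The function name `collect_stream` and the sample events are hypothetical, and this is not Roshi's own implementation.

```python
import json
from typing import Iterable, List, Tuple


def collect_stream(lines: Iterable[str]) -> Tuple[List[str], str]:
    """Split an OpenAI-style SSE stream into partial chunks and the final text.

    Assumes each event is a single `data: <json>` line and that the stream
    ends with `data: [DONE]`. Other providers frame their events differently.
    """
    chunks: List[str] = []
    for raw in lines:
        line = raw.strip()
        if not line.startswith("data:"):
            continue  # skip blank separators, comments, and other SSE fields
        data = line[len("data:"):].strip()
        if data == "[DONE]":
            break
        event = json.loads(data)
        for choice in event.get("choices", []):
            text = choice.get("delta", {}).get("content")
            if text:
                chunks.append(text)
    return chunks, "".join(chunks)


# A hand-written sample stream, not captured from a real provider.
sample = [
    'data: {"choices": [{"delta": {"role": "assistant"}}]}',
    "",
    'data: {"choices": [{"delta": {"content": "Hel"}}]}',
    'data: {"choices": [{"delta": {"content": "lo"}}]}',
    "data: [DONE]",
]
parts, final = collect_stream(sample)
print(parts, final)
```

Keeping the individual chunks next to the joined text is what lets a streaming view show both the token-by-token flow and the completed message.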

03

Every request and response is stored locally. Search, replay, compare, and recover the exact payload from any previous run.
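As an illustration of what a local run history can look like, the sketch below keeps each request and response as verbatim JSON in SQLite. The schema and the helper names (`save_run`, `search_runs`, `load_request`) are assumptions made for this example; the page does not describe how Roshi stores its history.

```python
import json
import sqlite3

# Hypothetical schema: one row per run, with the raw JSON kept verbatim.
db = sqlite3.connect(":memory:")  # a real app would use a file on disk
db.execute(
    "CREATE TABLE runs ("
    "id INTEGER PRIMARY KEY, provider TEXT, request TEXT, response TEXT)"
)


def save_run(provider: str, request: dict, response: dict) -> int:
    """Store one request/response pair and return its run id."""
    cur = db.execute(
        "INSERT INTO runs (provider, request, response) VALUES (?, ?, ?)",
        (provider, json.dumps(request), json.dumps(response)),
    )
    db.commit()
    return cur.lastrowid


def search_runs(term: str) -> list:
    """Return ids of runs whose request or response contains `term`."""
    like = f"%{term}%"
    rows = db.execute(
        "SELECT id FROM runs WHERE request LIKE ? OR response LIKE ? ORDER BY id",
        (like, like),
    )
    return [row[0] for row in rows]


def load_request(run_id: int) -> dict:
    """Recover the exact payload of an earlier run so it can be replayed."""
    row = db.execute("SELECT request FROM runs WHERE id = ?", (run_id,)).fetchone()
    return json.loads(row[0])


first_request = {"model": "example-model", "messages": [{"role": "user", "content": "ping"}]}
first_id = save_run("example-provider", first_request, {"text": "pong"})
second_id = save_run("example-provider", {"model": "example-model"}, {"text": "other"})
print(search_runs("pong"), load_request(first_id))
```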

04

Client-only. API keys stay on your machine. Requests go directly to the provider — no hosted relay, no sign-up.

05

Export validated requests as cURL, Python, or JavaScript snippets. Move from exploration to implementation in one click.
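The idea behind snippet export can be sketched in a few lines: take the validated URL, headers, and JSON body, and render them as a shell-safe cURL command. The function `to_curl`, the endpoint, the model name, and the key placeholder below are all hypothetical, and the snippets Roshi actually generates may be formatted differently.

```python
import json
import shlex
from typing import Dict


def to_curl(url: str, headers: Dict[str, str], payload: dict) -> str:
    """Render a JSON POST request as a shell-safe cURL command."""
    parts = ["curl", "-sS", "-X", "POST", shlex.quote(url)]
    for name, value in headers.items():
        parts += ["-H", shlex.quote(f"{name}: {value}")]
    # shlex.quote protects the double quotes inside the JSON body.
    parts += ["-d", shlex.quote(json.dumps(payload))]
    return " ".join(parts)


example_url = "https://api.example.com/v1/chat/completions"  # placeholder endpoint
command = to_curl(
    example_url,
    {
        "Content-Type": "application/json",
        "Authorization": "Bearer YOUR_API_KEY",  # placeholder, not a real key
    },
    {"model": "example-model", "messages": [{"role": "user", "content": "Hi"}]},
)
print(command)
```

Quoting every argument with `shlex.quote` means prompts that contain quotes or spaces still paste into a POSIX shell as a single argument.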

06

Attach images, tune temperature and token limits, adjust headers, and work with the parameters engineers actually need.
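For reference, this is roughly what those controls translate to in a request body. The sketch assumes the OpenAI-style chat completions shape (`temperature`, `max_tokens`, and an `image_url` content part carrying a base64 data URL) and a PNG attachment. Other providers name these fields differently, and `build_payload` and the model name are hypothetical.

```python
import base64
from typing import Optional


def build_payload(
    model: str,
    prompt: str,
    image_bytes: Optional[bytes] = None,
    temperature: float = 0.7,
    max_tokens: int = 256,
) -> dict:
    """Build an OpenAI-style chat request with an optional PNG attachment."""
    content = [{"type": "text", "text": prompt}]
    if image_bytes is not None:
        encoded = base64.b64encode(image_bytes).decode("ascii")
        content.append({
            "type": "image_url",
            # Assumes PNG data; adjust the MIME type for other formats.
            "image_url": {"url": f"data:image/png;base64,{encoded}"},
        })
    return {
        "model": model,
        "messages": [{"role": "user", "content": content}],
        "temperature": temperature,
        "max_tokens": max_tokens,
    }


payload = build_payload(
    "example-model", "Describe this image.", b"\x89PNG", temperature=0.2, max_tokens=128
)
print(payload["temperature"], payload["max_tokens"])
```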

Product

Workflow

Choose a built-in template or configure your own endpoint, key, custom headers, and model list.

Iterate on messages, parameters, and streaming to understand what the model does before writing integration code.

Export snippets, keep the run in history, and hand the exact working payload to your app.

Comparison

| Feature | Roshi | cURL / CLI | Postman | Provider playground |
|---|---|---|---|---|
| Live streaming | ✓ | ✓ | — | ✓ |
| Multi-provider | ✓ | ✓ | ✓ | — |
| Local history | ✓ | — | ✓ | — |
| No account required | ✓ | ✓ | — | — |
| Code generation | ✓ | — | ✓ | — |
| LLM-specific UI | ✓ | — | — | ✓ |
| Keys stay local | ✓ | ✓ | — | — |
| Open source | ✓ | ✓ | — | — |

Security

Roshi is client-only: no sign-up, no hosted relay, and no analytics on your provider traffic. Settings and history are stored locally.

What Roshi optimizes for

Download

Roshi is free and open source. Pay once on the Mac App Store to get automatic updates and support future development.

Direct Download

Everything you need to test and debug LLM APIs.

Mac App Store

Support ongoing development and unlock future features.

FAQ

Roshi is client-only, with no backend in between. Your machine talks directly to the provider, and secrets are stored locally.

OpenAI, Anthropic, Google Gemini, and OpenRouter out of the box. You can also add any OpenAI-compatible, Anthropic-compatible, or Gemini-compatible endpoint.

Streaming responses are first-class, and the composer supports multi-turn conversations with role-based messages.

Roshi generates cURL, Python, and JavaScript snippets from the request you have validated in the UI.

Roshi runs on macOS 12 (Monterey) and later, including both Apple Silicon and Intel Macs.

Windows and Linux are not supported yet, but both are on the roadmap. Star the GitHub repository to follow progress.

Roshi is released under the MIT License. You can use, modify, and distribute it freely.

Download the macOS app, connect your providers, and start testing LLM APIs in a purpose-built workspace.